They say a picture is worth a thousand words, but it gets a little tough to fit a thousand words in a search box, and that’s where visual search comes to your rescue.

Visual search technology is on the rise. It saves people from writing painstakingly long and complicated queries into the search box. One does not have to wonder if they picked the right keywords when satisfactory results don’t show up.

Visual search in its essence is the ability to enter a search query with the help of images rather than text.

Bringing visual search into everyday search puts more knowledge within reach. Imagine being able to find out what breed of dog you saw playing in the park, what bug you saw crawling by, or what a signboard says in a foreign country, all with a single click.

“Being able to search the world around you is the next logical step”

— Brian Rakowski, VP Product Management, Google.

Businesses in several domains have recognized the importance of visual search. Here are some of those domains and the companies in them that are integrating visual search into their businesses.

Search Engines

As more industries recognize the new era that visual search promises, more companies are investing in a technology that is still in its development stage.

Search engines were the first to take notice, which makes sense since visual search has the power to revolutionize the way we search for things. Now, instead of typing lengthy sentences into the search bar, you can just scan an item and receive a product description and, if available, where you can buy it and products similar to it.

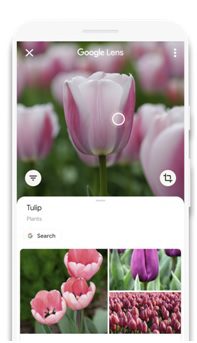

Google Lens

On Oct 4, 2017, Google launched its own visual search tool called Google Lens.

One could say Google Lens is Google Search with an image as the query instead of text, but Google Lens has numerous benefits that set it apart from image search and reverse image search.

Google combined its computer vision and AI technology to create Lens. It then integrated Lens into Google Assistant to give its users another tier of seamless use.

In its demonstration of Lens, Google showed how, with this combined power, people can not only see the ratings and reviews of a restaurant but also book a reservation there, just by taking a photo of the place with Google Lens.

- Google Lens will even go as far as picking out a dish for you when pointed at a menu card. It does this by aggregating the ratings people have given the dish and the images they have uploaded of it.

- You can also scan text to translate it or copy it. Or scan a number to call it.

- If an item of clothing or an object catches your eye, you can find similar products and where to buy them.

- Pointing your lens towards a building or monument will give you information such as hours of operation, facts, and more.

- The species of a plant or animal, along with other information about it, can also be found by taking its picture.

- You can find summaries and reviews of a book by scanning the cover.

Google Lens can be used through the main Lens app, Google Assistant, Google Photos, and the Google app.

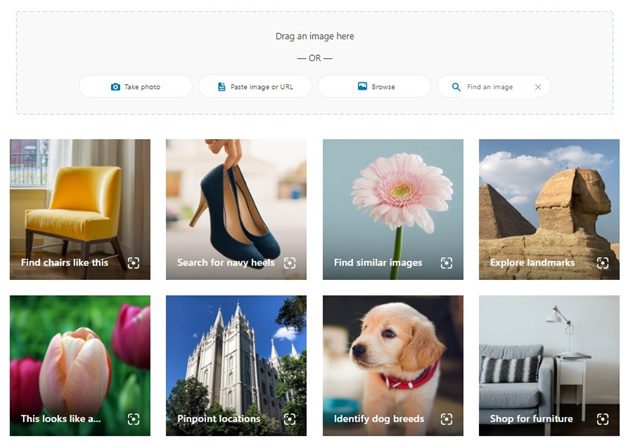

Bing Visual Search (Microsoft)

In June of 2018, Microsoft released visual search for its search engine Bing, with features akin to those of Google Lens.

Bing Visual Search has its own standalone app and website, and Windows users can also use the visual search feature right from their desktop. Released in Dec 2019, this feature allows users to paste a screenshot of the image they wish to search into the Windows search bar. As of now, this feature is only available in the US, with an international release expected soon.

One can run a visual search on an image by taking a photo, pasting the URL of the image, browsing local files, or looking up the image online.

So what can you do with Bing’s visual search tool?

- Find the name, scientific name, different varieties, and similar flora by taking or uploading a picture of a flower.

- Find similar-looking images from Scandid, Adobe Stock Photos, and the rest of the web.

- Learn about the breed, characteristics, and similar breeds of a dog by uploading its pic

- Wondering which celeb you look like? Bing can tell you your celebrity doppelgangers.

- You can scan a text to search it or copy-paste it.

- Got the name of a celebrity on the tip of your tongue? Bing has you covered. It will help you identify celebrities, politicians, and historical figures.

- Find similar-looking art on Bing Visual Search, powered by The Metropolitan Museum of Art (The Met) Open Access program.

- Find landmarks and nearby places by taking their photo

Microsoft also offers custom visual search for commerce where retailers can use this service to sell to their audience. Custom visual search is paired with “Intelligent Product Search” and “Personalization and Product Recommendations.”

Social Media

The most image-centric apps, such as Pinterest and Snapchat, benefit a great deal from the rise of visual search technology.

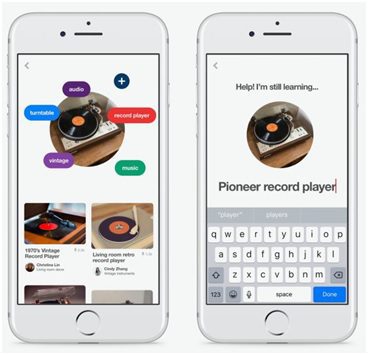

Pinterest Lens

It’s no surprise that Pinterest was the first to incorporate visual search into its app, since the whole website runs on images (and a powerful image algorithm).

Pinterest, known for being an image-based social network, first released its visual search engine, Pinterest Lens, in Feb of 2017. It takes images from the Pinterest camera, pins, and the gallery as a query and returns pins that feature similar products or styles.

Users could show Lens a carrot to see recipes with carrots and filter them by vegetarian, vegan, or gluten-free. They could also take a picture of a skirt to put together an outfit, as Pinterest would show similar skirts and what others paired them with.

Pinterest also has a “pinch to zoom” feature in its camera, and it lets you pick photos from phone galleries, pins, and of course live scenes to run a visual search on.

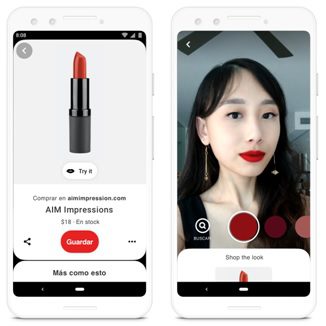

At its core, Pinterest Lens shows you images and suggestions “related” to the picture. One can say that it’s finding similar pins, just with visual search. Pinterest would mostly show results from its own platform, accompanied by some images from other websites that were tagged on Pinterest. All this was until the launch of “Try on”.

Pinterest launched the new “Try on” feature in January of 2020, debuting with lipsticks. It allows Pinterest users to pick a shade of lipstick from numerous prominent brands, such as Sephora, Neutrogena, NYX Professional Makeup, Estée Lauder, and Lancôme, among others. Users get to try the shades on live and are redirected to the brand’s website should they wish to buy one.

“Try on” stands on the foundation laid by Pinterest Lens and, while this feature itself isn’t all that new in the market, Pinterest shares what sets it apart:

“We believe in celebrating you, and so our AR won’t be augmenting your reality, but rather helping you to make happy and real purchases for your life.”

Pinterest also announced its partnership with Target which would allow its users to snap a photo of any product and find items similar to it on Target.

Snapchat

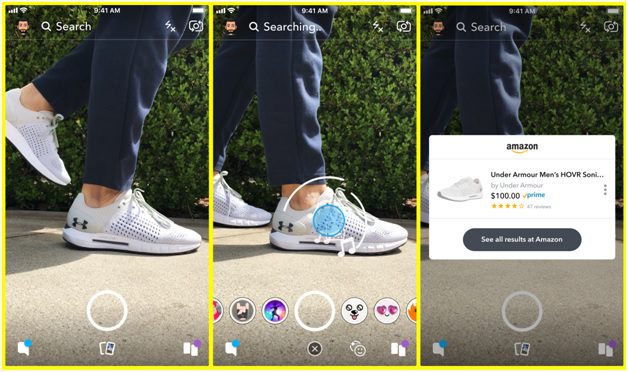

Apart from applying fun filters to your face and finding out which song is playing (through Shazam), you can now also shop on Amazon by long-pressing the screen.

Amazon and Snapchat paired up in Sept of 2018 to let users see and buy products via the latter’s app.

To run a visual search on an item, one has to point their camera at it and long-press the screen. Snapchat will then try to figure out whether the user is trying to identify a song or scan an item or barcode. When it recognizes the product, an Amazon card appears on the app screen containing the title, image thumbnail, average rating, seller, and Prime availability. Tapping the card redirects the user to the Amazon app or website.

At its initial launch, the feature was only available to a small part of the US, with a gradual rollout planned everywhere else.

Application Developers

With the advent of visual search technology, many companies have developed applications for their own needs, while others build them for businesses that prefer to outsource the work.

eBay

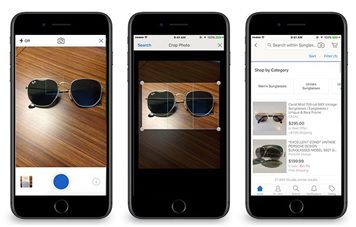

The multinational e-commerce corporation eBay has its own visual search system in place. Its in-house AI allows users to enter images as well as text into the search bar. These images can come from the in-app camera, the camera roll, a product on the eBay app, or even another app or website.

eBay announced image search on its platform in July of 2017, when it unveiled two features: Find It On eBay and Image Search. With Image Search, users can find products by taking a photo or uploading an image from the camera roll.

Image search on eBay (Source)

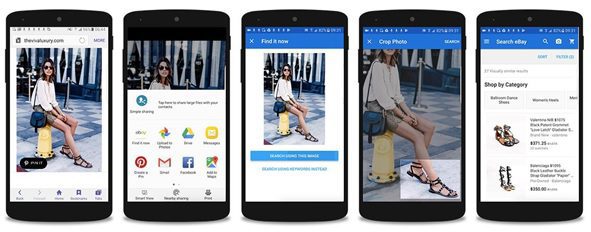

Find It On eBay, on the other hand, lets users “share” images from third-party websites. For instance, if you find an item you like in an online magazine, you can send that image to the eBay app and it will find that item or the ones with the closest resemblance.

The image processing in both these features is done with a deep learning model called a convolutional neural network, which compares the uploaded image to the images of items listed on eBay. These features went live in Oct 2017.
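To make the comparison step a little more concrete, here is a minimal sketch of how an uploaded image might be matched against listing images once a convolutional neural network has turned each image into a list of numbers (a feature vector). This is not eBay's implementation: the listing names, the vectors, and the `rank_listings` helper are all made up for illustration, and a real system would get its vectors from a trained model rather than typing them in by hand.

```python
import math

# Hypothetical feature vectors. In a real system each vector would be
# produced by a trained convolutional neural network, not written by hand.
LISTINGS = {
    "red leather handbag": [0.9, 0.1, 0.3],
    "blue denim jacket": [0.1, 0.8, 0.4],
    "red canvas tote": [0.8, 0.2, 0.5],
}


def rank_listings(query_vector, listings):
    """Return listing names ordered from closest to farthest from the query."""
    distances = [
        (name, math.dist(query_vector, vector))  # Euclidean distance
        for name, vector in listings.items()
    ]
    distances.sort(key=lambda pair: pair[1])
    return [name for name, _ in distances]


# Assumed feature vector of the photo the shopper uploaded.
uploaded_photo = [0.85, 0.15, 0.35]
ranked = rank_listings(uploaded_photo, LISTINGS)
print(ranked)
```

The closer two vectors are, the more alike the images are assumed to look, so the first name in the list is the best visual match. Euclidean distance is only one possible choice here; other similarity measures would work in the same way.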

Find It On eBay (Source)

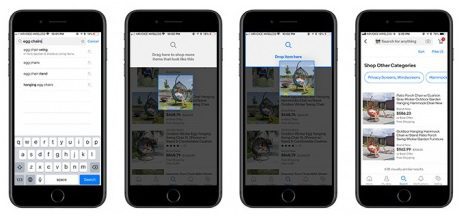

eBay further updated image search in 2018 with a drag-and-drop feature in its app, which allows users to drag images they find in the app itself and drop them into the search box.

Drag-and-drop feature (Source)

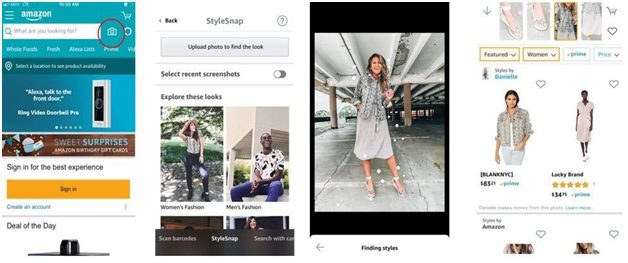

Amazon (StyleSnap)

According to an Amazon Scout, Visual Search is perfect for shoppers who face two common dilemmas: “I don’t know what I want, but I’ll know it when I see it” and “I know what I want, but I don’t know what it’s called.”

In June of 2019, Amazon released its visual search shopping feature, StyleSnap. Instead of being a standalone app, StyleSnap is integrated into the Amazon shopping app.

Customers who wish to run a visual search on an image can select the camera icon in the Amazon shopping app and find clothing items similar to the ones in the image.

StyleSnap was developed using a combination of computer vision and deep learning, and Amazon explains how it uses artificial neural networks in its visual search algorithm. Neural networks are made up of millions of artificial neurons connected to each other and can be “trained” to detect images of outfits by feeding them a series of images.
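What does “training” look like in practice? The toy sketch below trains a single artificial neuron, nowhere near the millions that Amazon describes, to tell “outfit” examples from “not an outfit” examples. Everything in it is an assumption made for illustration: each image is reduced to two invented scores, the labels are made up, and the training loop is plain gradient descent rather than anything Amazon has published.

```python
import math


def sigmoid(z):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features):
    """Probability (0..1) that the neuron assigns to the 'outfit' label."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


def train_neuron(samples, labels, epochs=2000, learning_rate=0.5):
    """Fit one neuron by repeatedly nudging its weights to reduce the error."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for features, label in zip(samples, labels):
            error = predict(weights, bias, features) - label
            for i, x in enumerate(features):
                grad_w[i] += error * x
            grad_b += error
        # Move each weight a small step against its average error gradient.
        weights = [w - learning_rate * g / len(samples)
                   for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(samples)
    return weights, bias


# Hypothetical training set: two invented scores per image,
# label 1 = outfit, label 0 = not an outfit.
SAMPLES = [[0.9, 0.8], [0.8, 0.9], [0.7, 0.95],
           [0.1, 0.2], [0.2, 0.1], [0.15, 0.3]]
LABELS = [1, 1, 1, 0, 0, 0]

weights, bias = train_neuron(SAMPLES, LABELS)
print(predict(weights, bias, [0.85, 0.85]))  # an unseen outfit-like example
print(predict(weights, bias, [0.1, 0.1]))    # an unseen non-outfit example
```

The neuron starts out knowing nothing (all weights are zero) and gets better only because it is shown labelled examples over and over, which is the sense of “feeding it a series of images” described above.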

The new Amazon Fashion tool is currently available in the UK and Germany. It works with dresses, tops, bottoms, shoes, and bags in the womenswear and menswear categories.

But StyleSnap isn’t Amazon’s first attempt at retail shopping with visual search. Amazon launched Echo Look in 2017 with an AI-powered camera. This was basically an Amazon Echo combined with a visual-search-powered camera. Along with all the features that “Alexa” had to offer, you could:

- Give Amazon’s Alexa a voice command to take your picture or a six-second video.

- Ask Alexa “how do I look?” for advice based on weather, occasion, and current styling trends.

- Share captured images with friends.

- Blur background on images.

But it failed to make a dent after its release and is now discontinued.

Slyce

This Toronto (Canada) based company started its journey in 2012 as a business consulting firm and then ventured into developing image recognition technology. Often referred to as the “Shazam for shopping”, the company provides its services to various big-name brands such as Neiman Marcus, Dior, The Home Depot, Tommy Hilfiger, Bed Bath & Beyond, and Macy’s, among others.

Their apps allow shoppers to scan 1D, 2D, and 3D images. That is, shoppers can scan barcodes, scan images from magazines, or take a photo of an item of clothing.

Their services allow brands to launch their visual search apps. Within the app, users can click pictures of items such as clothes or furniture and the app will show the closest matching results that they can buy from respective stores.

According to the company, today’s users hold the power of a DSLR in their palms, and this has the power to change the way people discover, interact with, and shop for products.

Syte

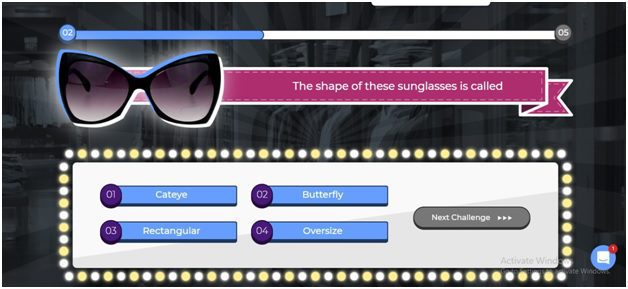

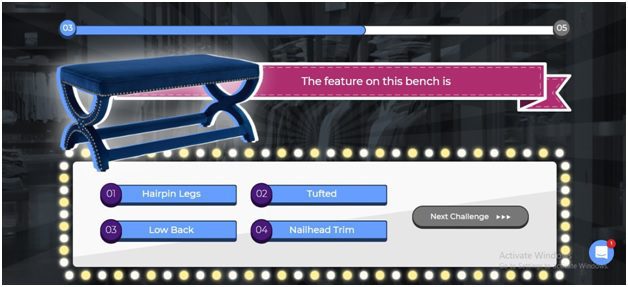

When you open Syte’s website, you are offered a quiz that compares your knowledge with that of their AI.

And indeed, when you take the quiz, you realize that there are many things you just don’t know the names of, such as:

Or

How would someone look up this bench or these sunglasses if they do not know the name of the make or type? Syte drives that point home and makes one realize that visual search can make searching for articles far easier.

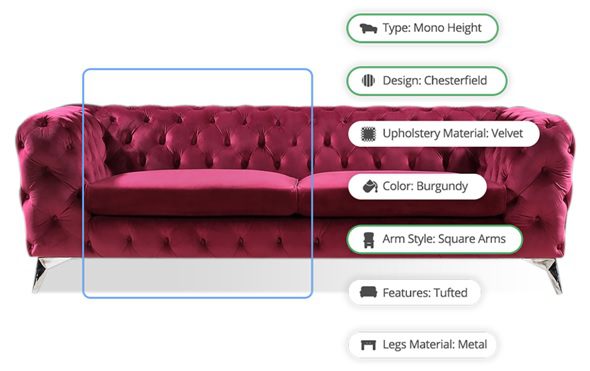

Their visual AI not only allows buyers to shop by snapping pictures but also shows clothing and accessories that go with it.

Their AI also performs “deep tagging”, which adds numerous tags to an item based on its color, type, height, design, etc. These tags help the AI return more accurate results.
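As a rough illustration of why tags help, the sketch below scores products by how many tags they share with the tags detected in a shopper's photo. It is only a guess at the general idea, not Syte's system: the `Product` class, the tag names and values, and the scoring rule are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    name: str
    tags: dict = field(default_factory=dict)  # attribute -> value


def tag_overlap(query_tags, product):
    """Count the attribute/value pairs the product shares with the query."""
    return sum(
        1 for attribute, value in query_tags.items()
        if product.tags.get(attribute) == value
    )


def best_matches(query_tags, products, limit=2):
    """Return the names of the products sharing the most tags with the query."""
    ranked = sorted(products, key=lambda p: tag_overlap(query_tags, p), reverse=True)
    return [p.name for p in ranked[:limit]]


# Hypothetical catalog with hand-written tags.
CATALOG = [
    Product("floral maxi dress",
            {"color": "red", "type": "dress", "length": "maxi", "design": "floral"}),
    Product("plain midi dress",
            {"color": "red", "type": "dress", "length": "midi", "design": "plain"}),
    Product("floral blouse",
            {"color": "blue", "type": "blouse", "design": "floral"}),
]

# Tags we assume were detected in the shopper's photo.
photo_tags = {"color": "red", "type": "dress", "length": "maxi", "design": "floral"}
matches = best_matches(photo_tags, CATALOG)
print(matches)
```

The more attributes an item is tagged with, the more finely the results can be told apart, which is presumably why a product gets many tags rather than just one.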

But they don’t stop at online visual search via apps. They also extend their services to “brick-and-mortar” stores with the help of an AI-powered in-store Smart Mirror.

The Israeli startup lists many well-known brands on its page as customers, including Samsung, Prada, Kohl’s, BooHoo, and Sainsbury’s.

Wayfair

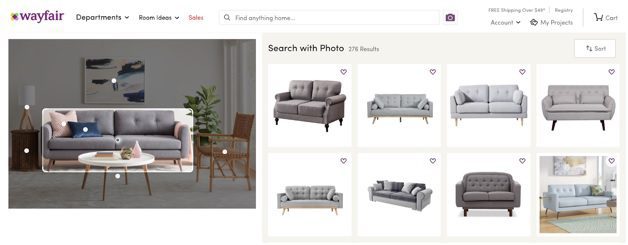

Wayfair operates entirely online and sells furniture and home decor goods. The company is headquartered in Boston, Massachusetts, with warehouses throughout the U.S. as well as in Canada, Germany, Ireland, and the UK.

Their shoppers can take photos of the items they like and find similar products with the help of visual search through both the app and the website.

The company has a dedicated team that develops its visual search algorithm, and it frequently showcases its technology at conferences.

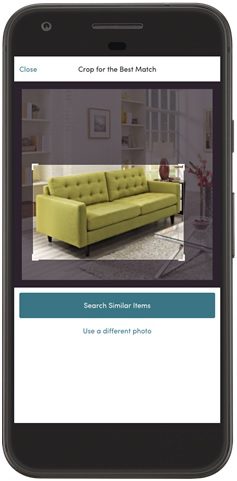

Earlier versions of the technology required users to crop around the object for best results, but the later iteration of the app detects objects on its own and pre-selects the cropped area once the image is uploaded.

Once the item has been selected it shows variations of said item.

But their team didn’t stop at that. They created a Visual Recommendations algorithm that shows items visually similar to the ones a customer has already viewed. It incorporates the customer’s style preferences into its recommendations and was developed to streamline the shopping process.
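One simple way to picture recommendations like these is to average the feature vectors of the items a shopper has viewed into a “style profile” and then suggest the unviewed items that point in the most similar direction. The sketch below does exactly that. It is an assumption about the general approach, not Wayfair's algorithm, and the item names, vectors, and `recommend` helper are invented for the example.

```python
import math


def mean_vector(vectors):
    """Average several equal-length vectors into one profile vector."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def cosine_similarity(a, b):
    """Similarity of direction between two vectors (1.0 means identical)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recommend(viewed, catalog, limit=2):
    """Suggest unviewed items most similar to the shopper's style profile."""
    profile = mean_vector([catalog[name] for name in viewed])
    candidates = [
        (name, cosine_similarity(profile, vector))
        for name, vector in catalog.items()
        if name not in viewed
    ]
    candidates.sort(key=lambda pair: pair[1], reverse=True)
    return [name for name, _ in candidates[:limit]]


# Hypothetical visual feature vectors for a small furniture catalog.
FURNITURE = {
    "oak mid-century armchair": [0.9, 0.2, 0.1],
    "walnut mid-century side table": [0.8, 0.3, 0.2],
    "oak mid-century bookshelf": [0.85, 0.25, 0.1],
    "chrome industrial bar stool": [0.1, 0.9, 0.3],
    "velvet glam ottoman": [0.2, 0.1, 0.9],
}

viewed_items = ["oak mid-century armchair", "walnut mid-century side table"]
suggestions = recommend(viewed_items, FURNITURE)
print(suggestions)
```

Because the profile is built from what the shopper has already looked at, the top suggestion leans toward the same style, while items the shopper has already seen are left out.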

Autonomous Vehicles

Autonomous vehicle companies rely heavily on cameras to detect objects in the environment. The vehicles usually have cameras mounted on all sides (front, back, left, and right) so that a 360-degree view of their surroundings can be created.

Others opt for fisheye cameras, whose super-wide lenses provide a panoramic view.

The images captured by the cameras are then processed, and that is where visual recognition comes into play. That is why it plays a major role in autonomous vehicles.

One example is Nuro, an autonomous vehicle company that makes driverless delivery vehicles.

Their team consists of veterans from fields such as robotics, consumer electronics, autonomous vehicles, and the automotive industry.

Conclusion

Recently, there has been some patent activity in the field of visual search by its developers. (You can read the patents by Syte, Slyce, and Nuro.)

And if the patents are any indication, we can safely assume that there will be more hustle and bustle in the visual search field. And why wouldn’t there be? It gives you the power to search the world around you, and also shop it 🙂

We may see many more industries embracing this technology as it grows and develops more, but till then let’s enjoy the fact that we now have the power to click pictures of any shoes and buy them 🙂